4D Matrix DCT Coding Algorithm for Stereo Video

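【Abstract】 An effective stereo video coding method based on four-dimensional matrix DCT (4D-MDCT) theory is proposed. Conventional methods perform both disparity estimation and motion estimation for every stereo pair. The proposed method uses the 4D-MDCT to remove the redundancy between the binocular image data, so only one third of the motion estimation is required and no disparity estimation is needed at all. The first stereo pair is called the “I stereo pair”, and each subsequent set of three adjacent stereo pairs is called a “P stereo pair” and is stored in the form of 4D units. Motion estimation is performed only on the blocks at the middle time of a “P stereo pair”, and the motion vector of the middle time is taken as the uniform motion vector of the 4D unit when computing motion compensation. The 4D-MDCT is then used to further remove temporal and spatial redundancy. Experimental results show that the method achieves good coding performance with greatly reduced computational complexity.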

【Keywords】 stereo video coding; stereo pair; 4D matrix DCT (4D-MDCT); 4D unit

As early as 1928, John Logie Baird in Great Britain and Paul Nipkow in Germany mentioned stereoscopic television[1]. For a long time afterwards, however, few people paid attention to the subject. During the last 5 years the situation has changed, and stereoscopic television has again become important in both research and industry, owing to advances in camera technology, computing power, rendering technology and 3D displays. Stereo video gives users 3D scene perception by presenting two frames to the two eyes simultaneously, so it has wide application in fields such as 3D television, 3D video applications, robot vision, virtual machine, medical surgery and so on[2]. Although stereo video is attractive, the amount of video data and the computational complexity are doubled. A good coding system is therefore required to handle the huge amount of data within limited bandwidth.

Coding schemes for stereo video have to be adapted to the selected scene representation. One such representation is a polygonal mesh of 3D points and their connectivity. Such data are typically stored and transmitted in text format, which is a tremendous waste of capacity. ISO MPEG has recognized the importance of efficient 3D mesh compression and included in MPEG-4 a tool called 3D Mesh Compression (3DMC) for static meshes, which exploits the spatial dependencies of adjacent polygons[3]. The common system is a straightforward solution that encodes all the video signals independently using a state-of-the-art video codec such as MPEG-2/H.264[4] or a wavelet codec[5]. Typical stereo video systems take one of the stereo video sequences as the main sequence and encode it with the MPEG-2/H.264 video coding standard at high visual quality; the other sequences are taken as auxiliary sequences and are predicted both from the main sequence using disparity compensation (DC) and from the previous auxiliary frames using motion compensation (MC)[6-10].

The main principle of image compression techniques is to remove the data redundancy that is inherent in spatial and temporal correlation. Instead of calculating both disparity estimation (DE) and motion estimation (ME) for every stereo pair, this paper proposes a novel method based on four-dimensional matrix DCT (4D-MDCT) theory. Only one third of the ME calculations and no DE calculations are needed, so the computational complexity is greatly reduced.

The rest of the paper is organized as follows. Section 1 describes the proposed stereo video coding system. Experimental results are shown in Section 2. Finally, Section 3 gives the conclusion.

1 Proposed stereo video coding system

In the proposed method, ME is calculated for only one in every three stereo pairs, whereas traditional algorithms compute both DE and ME for every stereo pair. The 4D-MDCT was then used to further eliminate the spatial and temporal redundancy.

According to the characteristics of the human visual system (HVS), a quantization table based on the 4D matrix was generated and used. The transformed coefficients were then scanned according to different modes and encoded by context-based adaptive variable length coding (CAVLC)[4].

1.1 Group of stereo pairs

A group of stereo pairs in this paper consisted of one “I stereo pair” and three “P stereo pairs”. In Fig. 1, the first stereo pair was the “I stereo pair”, and each subsequent set of three adjacent stereo pairs was referred to as a “P stereo pair”. The left and right frames of each stereo pair were labeled L and R, respectively, and the width and height of each stereo frame were denoted X and Y, respectively. Only the frames at the middle time of each “P stereo pair” were processed by ME, with the former reconstructed frames as the reference.
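The grouping described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `group_of_stereo_pairs` and the dictionary layout are hypothetical and do not come from the paper, and time indices are counted from the “I stereo pair” at T=k.

```python
def group_of_stereo_pairs(k, num_p=3, pairs_per_p=3):
    """Time indices of one group: an I stereo pair at T=k, then num_p P stereo pairs.

    Each P stereo pair spans pairs_per_p adjacent time instants; only its
    middle instant is motion-estimated (hypothetical data layout).
    """
    p_pairs = []
    t = k + 1
    for _ in range(num_p):
        times = list(range(t, t + pairs_per_p))
        p_pairs.append({"times": times, "me_time": times[pairs_per_p // 2]})
        t += pairs_per_p
    return {"i_time": k, "p_pairs": p_pairs}


group = group_of_stereo_pairs(0)
me_times = [p["me_time"] for p in group["p_pairs"]]
coded_p_instants = sum(len(p["times"]) for p in group["p_pairs"])
# One ME per P stereo pair: len(me_times) out of coded_p_instants time instants.
print(me_times, len(me_times), coded_p_instants)
```

With k=0 the middle instants are T=2, 5 and 8, so ME runs at three of the nine predicted time instants, which is the one-third figure quoted in the text.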

As shown in Fig. 1, the first “P stereo pair” consisted of the stereo pairs at T=k+1, T=k+2 and T=k+3. The shaded blocks in the first “P stereo pair” were gathered into a single 4D unit (4DU), which can be written as the 4D matrix $P_{I\times J\times 2\times 3}=[a_{ijck}]_{I\times J\times 2\times 3}$. The four dimensions of a 4DU are image width, image height, stereo video channel and the three adjacent frames in each channel, respectively. ME was calculated only between the blocks at T=k+2 and T=k. $MV_{k+2}$ is the motion vector of T=k+2, but it was taken as the uniform motion vector $MV_s$ of the shaded 4DU in the first “P stereo pair”, that is, $MV_s=MV_{k+2}$. The MC of the shaded 4DU was then calculated with this uniform $MV_s$ from the former reconstructed frames. In this way the computational complexity of ME is greatly reduced.
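A minimal sketch of how a 4DU might be assembled and compensated with one uniform motion vector is given below. It is an assumption-laden illustration rather than the authors' implementation: the helper names `extract_4du` and `residual_4du`, the array layout `[i, j, c, k]` (two spatial indices, channel, time) and the choice of predicting each channel from the reference frame of the same channel are all hypothetical.

```python
import numpy as np


def extract_4du(left_frames, right_frames, top, left, size):
    """Gather co-located blocks of three stereo pairs into a (size, size, 2, 3) unit.

    left_frames / right_frames: sequences of three 2D arrays (times k+1..k+3).
    """
    unit = np.empty((size, size, 2, 3), dtype=np.float64)
    for k in range(3):
        for c, frames in enumerate((left_frames, right_frames)):
            unit[:, :, c, k] = frames[k][top:top + size, left:left + size]
    return unit


def residual_4du(unit, ref_left, ref_right, top, left, mv):
    """Subtract a prediction fetched with one uniform MV (dy, dx) from every plane."""
    dy, dx = mv
    size = unit.shape[0]
    pred = np.stack(
        [ref[top + dy:top + dy + size, left + dx:left + dx + size]
         for ref in (ref_left, ref_right)],
        axis=-1,
    )  # shape (size, size, 2): one predicted block per channel
    # The same prediction is reused for all three time instants of the unit.
    return unit - pred[..., np.newaxis]


rng = np.random.default_rng(0)
ref_l, ref_r = rng.random((16, 16)), rng.random((16, 16))
cur_l = np.roll(ref_l, (-1, 2), axis=(0, 1))  # cur[y, x] = ref[y + 1, x - 2]
cur_r = np.roll(ref_r, (-1, 2), axis=(0, 1))
unit = extract_4du([cur_l] * 3, [cur_r] * 3, top=4, left=4, size=4)
res = residual_4du(unit, ref_l, ref_r, top=4, left=4, mv=(1, -2))
print(unit.shape, float(np.abs(res).max()))
```

In this toy case all three time instants are exact shifts of the reference, so the residual vanishes; with real video the planes at T=k+1 and T=k+3 would leave a small residual that the subsequent transform has to absorb.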

Fig. 1 A group of stereo pairs

1.2 Architecture of 4D-MDCT based stereo video encoder

Fig. 2 shows the architecture of the 4D-MDCT based stereo video encoder.

In Fig. 2, $I_k$ represented the “I stereo pair” at T=k, and $P_{k+n}$ represented a “P stereo pair”. $F'$ represented the reconstructed reference frame. $D$ was the residual information, and $D'$ was its reconstructed version, which differed from $D$ by some error. $X$ represented the coefficient information after transform and quantization.

Fig.2 Architecture of 4D-MDCT based stereo video encoder

As shown in Fig. 2, $I_k$ was first encoded by intra prediction. The reconstructed “I stereo pair” then served as a reference for constructing the other stereo pairs.

The $P_{k+n}$ were encoded by both intra prediction and MC. The MVs of the middle-time blocks in $P_{k+n}$ were taken as the uniform MVs of the 4DUs in $P_{k+n}$, and MC was then calculated with these MVs between $P_{k+n}$ and $F'$.

After prediction, the residual coefficients (D) were transformed by the 4D-MDCT (T), quantized by 4D matrix quantization (Q), reordered (Z) and finally encoded by context-based adaptive variable length coding (VLC). The MVs were encoded by Huffman coding.

1.3 Intra prediction mode

As in the H.264 standard[4], 9 optional intra prediction modes were used for the smaller 4DUs, $P_{4\times 4\times 2\times 3}=[a_{ijck}]_{4\times 4\times 2\times 3}$, and 4 optional modes were used for the larger 4DUs, $P_{16\times 16\times 2\times 3}=[a_{ijck}]_{16\times 16\times 2\times 3}$. The intra prediction modes for each plane of the 4DUs are shown in Fig. 3 and Fig. 4.

Fig. 3 Intra prediction modes in plane for smaller size of 4DUs

Fig. 4 Intra prediction modes in plane for larger size of 4DUs

1.4 Motion compensation

Because the difference between adjacent frames in the temporal domain is small, ME was calculated only between the middle time of a “P stereo pair” and the previous reconstructed reference frame. The three-step search (TSS) method was used, and the search criterion was the mean absolute error (MAE):

$$\mathrm{MAE}=\frac{1}{2MN}\sum_{c=0}^{1}\sum_{m=0}^{M-1}\sum_{n=0}^{N-1}\left|S_{k+2}(i,j,c)-S_{k}(m+i,n+j,c)\right|. \tag{1}$$

Here the search window has size $M\times N$, $S$ represents intensity, $c$ represents the channel and $k$ represents time.
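The search can be sketched as follows. This is an illustrative three-step search under stated assumptions, not the authors' code: the cost is read as a block-matching MAE averaged over both channels and all pixels of the block (matching the $1/(2MN)$ normalisation), frames are stored as arrays of shape `(2, H, W)`, and the function names, block size and initial step of 4 are hypothetical choices.

```python
import numpy as np


def mae(cur, ref, top, left, dy, dx, size):
    """Mean absolute error over both channels between the current block and
    the reference block displaced by (dy, dx). cur, ref: arrays (2, H, W)."""
    a = cur[:, top:top + size, left:left + size].astype(np.float64)
    b = ref[:, top + dy:top + dy + size, left + dx:left + dx + size]
    return float(np.mean(np.abs(a - b)))


def three_step_search(cur, ref, top, left, size=8, step=4):
    """Three-step search: test the 8 neighbours at the current step size,
    move to the best one, halve the step, and stop after step 1."""
    _, h, w = ref.shape
    best = (0, 0)
    best_cost = mae(cur, ref, top, left, 0, 0, size)
    while step >= 1:
        cy, cx = best  # centre of this search stage
        for dy in (-step, 0, step):
            for dx in (-step, 0, step):
                y, x = cy + dy, cx + dx
                if (y, x) == (cy, cx):
                    continue  # centre already evaluated
                inside = (0 <= top + y and top + y + size <= h
                          and 0 <= left + x and left + x + size <= w)
                if not inside:
                    continue  # candidate block would leave the frame
                cost = mae(cur, ref, top, left, y, x, size)
                if cost < best_cost:
                    best, best_cost = (y, x), cost
        step //= 2
    return best, best_cost


rng = np.random.default_rng(0)
ref = rng.random((2, 32, 32))
cur = np.roll(ref, (-4, 4), axis=(1, 2))  # cur[c, y, x] = ref[c, y + 4, x - 4]
mv, cost = three_step_search(cur, ref, top=8, left=8)
print(mv, cost)
```

Because the cost is summed over the left and right channels together, a single vector is obtained for the stereo block, which is consistent with using one uniform MV per 4DU. TSS examines at most 25 candidates here and may miss the global minimum on textured content.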

1.5 4D-MDCT theory

The 4D-MDCT is an extension of the 2D-DCT; in particular, the transforms along the third and fourth dimensions can eliminate the spatial and temporal redundancy of the coefficients. After the 4D-MDCT, the coefficients are compacted not only in the frequency domain but also in the temporal direction. The 4D-MDCT transformation matrix is expressed as Eq. (2):

$$B_M=[b_{u,v}]_{M\times M},\qquad b_{u,v}=\begin{cases}\sqrt{\dfrac{2}{M}}\cos\dfrac{u\pi(2v+1)}{2M}, & u\neq 0,\\[2ex] \sqrt{\dfrac{1}{M}}, & u=0,\end{cases} \tag{2}$$

where $M$ is the order of the transformation matrix, and $u$ and $v$ are the subscripts of the element in the transformation matrix.
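As a quick check of the transformation matrix, the sketch below builds $B_M$ numerically. It assumes the orthonormal DCT-II normalisation, that is, factors $\sqrt{2/M}$ for $u\neq 0$ and $\sqrt{1/M}$ for $u=0$ with $u,v=0,\dots,M-1$; this is the normalisation for which the inverse of $B_M$ equals its transpose. The function name `dct_matrix` is hypothetical.

```python
import numpy as np


def dct_matrix(M):
    """Orthonormal DCT-II matrix of order M (assumed normalisation)."""
    u = np.arange(M).reshape(-1, 1)  # row index (frequency)
    v = np.arange(M).reshape(1, -1)  # column index (sample position)
    B = np.sqrt(2.0 / M) * np.cos(u * np.pi * (2 * v + 1) / (2 * M))
    B[0, :] = np.sqrt(1.0 / M)       # special case u = 0
    return B


for M in (2, 3, 4):
    B = dct_matrix(M)
    # Orthonormality: B @ B.T should be the identity, so inv(B) == B.T.
    print(M, np.allclose(B @ B.T, np.eye(M)))
```

The orders 4, 2 and 3 are the ones needed for a 4×4×2×3 unit.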

The forward transformation of the 4D-MDCT is expressed as Eq. (3):

$$C_{sub}=((((A_{sub}B_4)_1B_4)_2B_2)_3B_3)_4. \tag{3}$$

The inverse transformation of the 4D-MDCT is expressed as Eq. (4):

$$A_{sub}=((((C_{sub}B_3^{-1})_4B_2^{-1})_3B_4^{-1})_2B_4^{-1})_1=((((C_{sub}B_3^{T})_4B_2^{T})_3B_4^{T})_2B_4^{T})_1, \tag{4}$$

where $A_{sub}$ is a submatrix obtained by partitioning matrix $A$, and $C_{sub}$ is the new matrix generated by applying the 4D-MDCT to $A_{sub}$[11].
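The forward and inverse transforms can be illustrated as a separable transform applied along each of the four dimensions in turn. The sketch below is one possible reading, not the authors' implementation: it assumes that the outer subscripts 1 to 4 in Eqs. (3) and (4) denote the dimension along which the DCT matrix acts, that the DCT matrices are orthonormal, and that the unit is stored as an array indexed `[i, j, c, k]`. The names `dct_matrix`, `mdct4` and `imdct4` are hypothetical.

```python
import numpy as np


def dct_matrix(M):
    """Orthonormal DCT-II matrix of order M (assumed normalisation)."""
    u = np.arange(M).reshape(-1, 1)
    v = np.arange(M).reshape(1, -1)
    B = np.sqrt(2.0 / M) * np.cos(u * np.pi * (2 * v + 1) / (2 * M))
    B[0, :] = np.sqrt(1.0 / M)
    return B


def mdct4(A):
    """Forward transform: apply B along each of the four dimensions of A."""
    mats = [dct_matrix(n) for n in A.shape]
    return np.einsum("ui,vj,wc,xk,ijck->uvwx", *mats, A)


def imdct4(C):
    """Inverse transform: apply B^T (= B^-1) along each dimension of C."""
    mats = [dct_matrix(n) for n in C.shape]
    # Summing over the first index of each B is multiplication by B^T.
    return np.einsum("ui,vj,wc,xk,uvwx->ijck", *mats, C)


rng = np.random.default_rng(0)
A = rng.random((4, 4, 2, 3))          # one 4x4x2x3 unit
C = mdct4(A)
print(np.allclose(imdct4(C), A))      # perfect reconstruction
print(np.allclose(np.sum(A ** 2), np.sum(C ** 2)))  # energy is preserved
```

For a unit whose six planes are identical and flat, all the energy lands in the single coefficient at index (0, 0, 0, 0), which illustrates why strongly correlated left/right and adjacent-time planes compact well without explicit disparity estimation.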

1.6 4D-M quantization

The quantization table was generated according to two characteristics of the HVS: the eye is more sensitive to changes at lower frequencies than at higher ones, and a stereo pair consisting of one sharp image and one blurred image can still produce appropriate visual perception[12-14]. The quantization step therefore depends on the position of the coefficient in the 4D matrix: the steps corresponding to lower frequencies and to the left channel are smaller than the others[11]. If the coefficient in a 4DU is $a_{ijck}$, the quantization step is given by Eq. (5):

$$Q_{I\times J\times C\times K}=\sin\left(\frac{\pi}{2}\times\frac{\mathrm{dis}(\xi)}{\mathrm{dis}(Z)}\right)\times QW, \tag{5}$$

where $QW$ is the quantization weight, which is a constant. The position of the coefficient $a_{ijck}$ is represented by the vector $\xi$, which is given by Eq. (6):

$$\xi=(i,j,c,k)^{T}. \tag{6}$$

$\mathrm{dis}(\xi)$ is the distance of $\xi$:

$$\|\xi\|=\sqrt{(\xi,\xi)}, \tag{7}$$

and $Z$ represents the position of the highest frequency. Assume that the matrices $C_{I\times J\times C\times K}=[c_{ijck}]_{I\times J\times C\times K}$ and $D_{I\times J\times C\times K}=[d_{ijck}]_{I\times J\times C\times K}$ are the coefficient matrices before and after quantization, respectively; then

$$D_{I\times J\times C\times K}=C_{I\times J\times C\times K}\,/\,Q_{I\times J\times C\times K}. \tag{8}$$

1.7 Reorder and variable length coding

After transform and quantization, most of the high-frequency coefficients were zero, but the distribution of the non-zero coefficients varied. Because non-zero coefficients tend to cluster not only at low-frequency positions but also along the time direction, the sum of each coefficient plane was checked from the last time to the first and from the right channel to the left; the all-zero planes were then skipped and only the remaining planes were scanned.

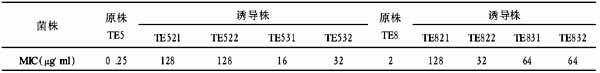

There were 8 scan modes for a 4DU. The scan modes and the remaining non-zero planes are given in Table 1.

Table 1 Scan mode and remaining non-zero planes

| Mode | Non-zero planes |
| --- | --- |
| 0 | Null |
| 1 | Pl0 |
| 2 | Pl0, Pr0 |
| 3 | Pl0, Pl1 |
| 4 | Pl0, Pr0, Pl1 |
| 5 | Pl0, Pl1, Pl2 |
| 6 | Pl0, Pr0, Pl1, Pl2 |
| 7 | Pl0, Pr0, Pl1, Pr1, Pl2, Pr2 |

In Table 1, Plk indicates that the kth left frame is a non-zero plane, and Prk indicates that the kth right frame is a non-zero plane, where k=0, 1, 2.
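The mode selection implied by Table 1 might look like the sketch below. Several points are assumptions rather than statements from the paper: a plane is treated as non-zero if any coefficient in it is non-zero (instead of testing a plain sum, which could cancel), the smallest-numbered mode whose plane set covers all non-zero planes is chosen when no mode matches exactly, and the names `SCAN_MODES`, `nonzero_planes` and `select_scan_mode` are hypothetical.

```python
import numpy as np

# Table 1 as sets of (channel, time) planes; channel 0 = left (Pl), 1 = right (Pr).
SCAN_MODES = [
    set(),                                                 # mode 0: Null
    {(0, 0)},                                              # mode 1: Pl0
    {(0, 0), (1, 0)},                                      # mode 2: Pl0, Pr0
    {(0, 0), (0, 1)},                                      # mode 3: Pl0, Pl1
    {(0, 0), (1, 0), (0, 1)},                              # mode 4
    {(0, 0), (0, 1), (0, 2)},                              # mode 5
    {(0, 0), (1, 0), (0, 1), (0, 2)},                      # mode 6
    {(0, 0), (1, 0), (0, 1), (1, 1), (0, 2), (1, 2)},      # mode 7: all planes
]


def nonzero_planes(coeffs):
    """Return the (channel, time) planes of a quantized unit (I, J, 2, 3)
    that contain at least one non-zero coefficient."""
    planes = set()
    for k in range(coeffs.shape[3] - 1, -1, -1):      # last time first
        for c in range(coeffs.shape[2] - 1, -1, -1):  # right channel first
            if np.any(coeffs[:, :, c, k] != 0):
                planes.add((c, k))
    return planes


def select_scan_mode(coeffs):
    """Pick the first mode of Table 1 whose planes cover every non-zero plane."""
    planes = nonzero_planes(coeffs)
    for mode, kept in enumerate(SCAN_MODES):
        if planes <= kept:
            return mode
    raise ValueError("unit has planes outside the 2 x 3 layout of Table 1")


unit = np.zeros((4, 4, 2, 3), dtype=np.int64)
unit[0, 0, 0, 0] = 5    # DC coefficient in Pl0
unit[1, 0, 1, 0] = -1   # one coefficient in Pr0
print(select_scan_mode(unit))
```

Only the mode index and the coefficients of the planes kept by that mode would then need to be scanned and entropy-coded.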

The 4D zigzag scanning was extended from the 2D zigzag scanning. Scanning order was from low frequency to high, from the left to the right, from the first to the third frame.

After reordering, the coefficients were assembled more compactly in a one-dimensional array. CAVLC was then used to encode the coefficients, and Huffman coding was used to encode the MVs.

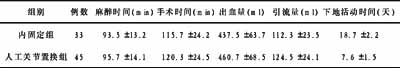

2 Experimental results

The proposed algorithm was tested on the Booksale and Crowd sequences, which were provided by Carnegie Mellon University. The two stereo sequences contain 90 and 170 frames, respectively. The size of each frame is 320×240. The luminance frames of these stereo sequences were used in our experiment. The objective quality measure of the reconstructed images is the peak signal-to-noise ratio (PSNR).
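For reference, PSNR on luminance frames can be computed as in the sketch below. It assumes 8-bit samples with a peak value of 255, which the paper does not state explicitly; the function name `psnr` is hypothetical.

```python
import numpy as np


def psnr(original, reconstructed, peak=255.0):
    """Peak signal-to-noise ratio in dB between two frames of equal size."""
    diff = original.astype(np.float64) - reconstructed.astype(np.float64)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")  # identical frames
    return 10.0 * np.log10(peak ** 2 / mse)


frame = np.full((240, 320), 128, dtype=np.uint8)  # one 320x240 luminance frame
noisy = frame.copy()
noisy[::2, ::2] += 4                              # perturb a quarter of the pixels
print(round(psnr(frame, noisy), 2))
```

Converting to floating point before subtracting avoids the wrap-around that unsigned 8-bit arithmetic would otherwise introduce.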

Fig. 5 and Fig. 6 show the average compression results for the right P frames of Booksale and Crowd, comparing the method proposed in this paper with IMDE (interpolated motion and disparity estimation) and EIMDE (enhanced IMDE), in which the left frames and the residual right frames are decomposed using a DWT[2].

Fig. 5 The right P frame for Booksale sequence

Fig. 6 The right P frame for Crowd sequence

From Fig. 5 and Fig. 6, it can be seen that the proposed algorithm provides gains over IMDE and EIMDE for both the Booksale and Crowd sequences. In addition, the computational complexity is greatly reduced.

Fig. 7 (a) and (b) show the 26th original stereo pair of Booksale. Fig. 8 (a) and (b) show the 26th stereo pair of Booksale reconstructed by the 4D-MDCT based method proposed in this paper.

Fig. 7 The 26th original stereo pair of Booksale

Fig. 8 The 26th reconstructed stereo pair of Booksale

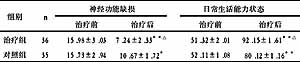

Fig. 9 shows the average compression results for the P frames of Crowd, comparing the method proposed in this paper with Joint H.264[15], which is based on the JM8.5 model and combines DE and ME.

Fig. 9 P frame for Crowd sequence

From Fig. 9 it can be seen that above 0.53 bpp the 4D-MDCT method gave higher reconstructed image quality, whereas at very low bit rates H.264 performed better. H.264 incorporates many sophisticated techniques, such as half-pixel prediction and rate-distortion optimization. In future work, these techniques may also be adopted to improve the performance of the proposed method.

3 Conclusion

The method proposed in this paper is based on the theory of the 4D-MDCT. Instead of calculating both ME and DE for every stereo pair, ME was calculated only for the frames at the middle time of each “P stereo pair”. The MVs of the blocks at the middle time of a “P stereo pair” were taken as the uniform MVs of the 4DUs. The MC was calculated between the 4DUs of the “P stereo pairs” and the reconstructed reference frames. The 4D-MDCT was then used to further eliminate the spatial and temporal redundancy. Because no DE and only one third of the ME calculations are required, the computational complexity is decreased. Experimental results demonstrated that the proposed scheme can provide better performance with reduced computational complexity.

【References】

[1] Tiltman R F. How “Stereoscopic” Television is Shown, Radio News, 1928.

[2] Ellinas J N, Sangriotis M S. Stereo video coding based on quad-tree decomposition of B-P Frames by motion and disparity interpolation [J]. IEE Proc-Vis Image Signal Process, 2005, 152(5): 639-647.

[3] ISO/IEC JTC1/SC29/WG11. Information Technology-Coding of Audio-visual Objects [S]. Part 2: Visual; 2001 Edition, Doc. N4350, Sydney, Australia, 2001.

[4] ITU-T Rec. H.264 / ISO/IEC 14496-10. Advanced Video Coding. Final Committee Draft [S]. Document JVTE022, 2002.

[5] WAN W B, SHI P F. Hybrid fractal and wavelet coding for image compression [J]. Chin J Stereol Image Analysis, 2002, 7(3):178-181.

[6] Ding L F, Chien S Y, Huang Y W, et al. Stereo video coding system with hybrid coding based on joint prediction scheme [J]. IEEE, 2005, 8(5): 6082-6085.

[7] Luo Y, Zhang Z Y. The frame estimation algorithm of stereo video sequences based on multi-resolution quadtree-based motion segmentation and overlapped blocks [J]. J Image Graphics, 2002, 7(7): 716-722.

[8] Nordling V. Efficient Compression of Stereoscopic Video Using the MPEG Standard [D]. Stockholm, Sweden: Royal Institute of Technology, 2003.

[9] Thanapirorn S, Fernando W A C, Edirisinghe E A. A zerotree stereo video encoder [J]. IEEE, 2003, 3(3):608-611.

[10] Yang W, Ngi N K. MPEG-4 based stereoscopic video sequences encoder [J]. Proc.ICASSP, 2004, 5(3):741-744.

[11] Du X W, Chen H X, Zhao Z J. 4D-MDCT based adaptive prediction and compensation color video coding [A]. 7th International Conference on Signal Processing [C]. Beijing, 2004, 7: 1131-1134.

[12] Pei S C, Lai C L. Very low bit-rate coding algorithm for stereo video with spatiotemporal HVS model and binary correlation disparity estimator [J]. IEEE J Selected Areas Communications, 1998,16(1): 98-107.

[13] Sethuraman S. Stereoscopic Image Sequence Compression Using Multiresolution and Quadtree Decomposition Based Disparity and Motion-Adaptive Segmentation [D]. Pittsburgh, Pennsylvania: Carnegie Mellon University, 1996.

[14] Stelmach L, Tam W J, Meegan D, et al. Stereo image quality: effects of mixed spatio-temporal resolution [J]. IEEE Trans Circuits Sys Video Technol, 2000, 10(2):188-193.

[15] Li S P, Jiang G Y, Yu M. Approaches to H.264-based stereoscopic video coding [J]. Computer Eng Appl, 2005, 1:77-79.